Click the above image to view the finished application and to download it for free.

Bruzed asked me to make an interactive music video for his audio track Deserve that “would melt people’s faces.”

I came up with the idea of a running herd of creatures.

Around the same time, I came across 21 Panoramic Photos That Went Horribly Wrong.

The image below felt like it might be a good fit for making people slightly uncomfortable.

I sketched out how the body might look and the structure of the bones.

Then I modeled the lil weird half dog in Blender, rigged him up and animated a few walk/run cycles.

I needed a way to control animations that were baked into a 3D model and thought FBX would be a good format, because if Unity uses it, it must be pretty decent.

It took me a while to complete the ofxFBX addon and have it ready for deployment. I was finally able to read in the 3D model with animations in openFrameworks!

The skipping video below didn’t quite capture the feel that we were looking for. A bit too happy and carefree.

There couldn’t just be one of these things; there needed to be enough to make the viewer feel anxious. The herd should be fleeing at a neurotically fast pace, based on the audio. I thought the Gallimimus scene from Jurassic Park had the right feel, so I used that for inspiration.

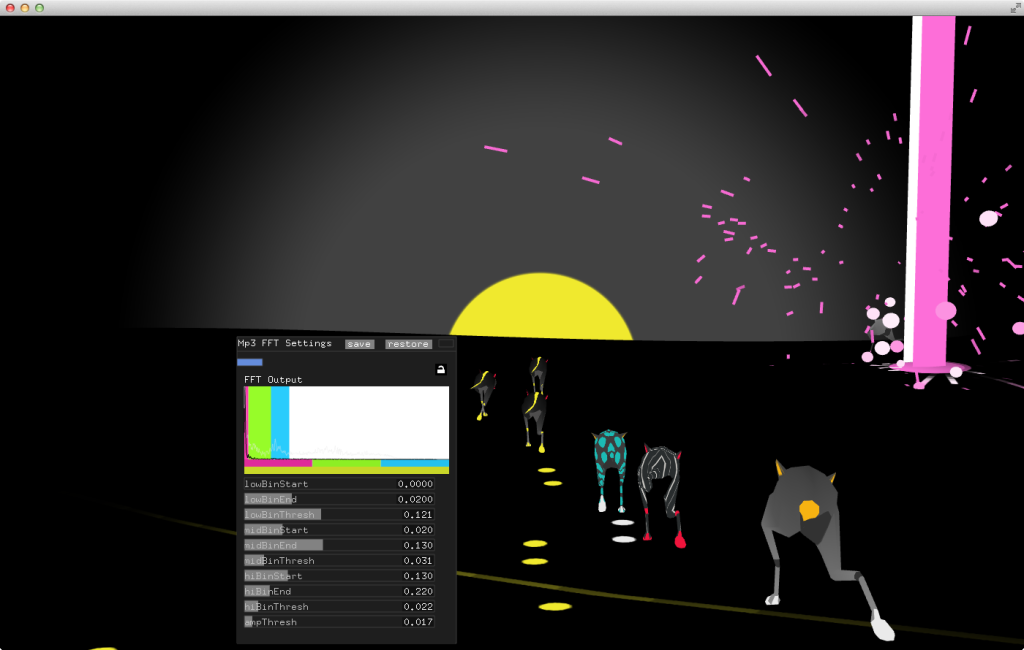

Below is a debug shot of the herd with the flocking parameters.

Audio Input

The application responds to both the pre-recorded Deserve track and live audio using FFT analysis. There are two app modes: Deserve and live input. The Deserve mode plays the Deserve track, reacts to its audio and has more features, like the head and brain. I made a simple XML-based event system to trigger the main events at certain times in the audio, for example switching from the dog scene to the head scene at a certain time. The live input mode reacts to any audio input and displays the weird dog things running in endless desperation.
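Here is a rough sketch of how a timed event system like that could work. This is not the actual code from the app: the `TimedEvent` and `EventTimeline` names and the cue names are made up for illustration, and the cues are passed in directly rather than parsed from XML. The idea is just to keep the cues sorted by time and, every frame, fire whichever ones the playback position has passed.

```cpp
#include <algorithm>
#include <cstddef>
#include <string>
#include <utility>
#include <vector>

// One cue on the timeline: fire `name` once playback reaches `timeSec`.
// (Hypothetical struct; in the real app the cues come from an XML file.)
struct TimedEvent {
    double timeSec;
    std::string name;
};

// Hypothetical helper that hands out cues as the track plays.
class EventTimeline {
public:
    explicit EventTimeline(std::vector<TimedEvent> events)
        : events_(std::move(events)) {
        // Sort by time so a single cursor can walk through the cues.
        std::stable_sort(events_.begin(), events_.end(),
                         [](const TimedEvent& a, const TimedEvent& b) {
                             return a.timeSec < b.timeSec;
                         });
    }

    // Call once per frame with the current playback position in seconds.
    // Returns the names of all cues that became due since the last call.
    std::vector<std::string> update(double playbackSec) {
        std::vector<std::string> fired;
        while (next_ < events_.size() &&
               events_[next_].timeSec <= playbackSec) {
            fired.push_back(events_[next_].name);
            ++next_;
        }
        return fired;
    }

    // Rewind the cursor, e.g. when the track restarts.
    void reset() { next_ = 0; }

private:
    std::vector<TimedEvent> events_;
    std::size_t next_ = 0;  // index of the next cue that has not fired yet
};
```

In an update loop, you would pass in whatever playback position the sound player reports and switch scenes based on the returned names. Because each cue fires only once, a dropped frame just means the cue fires a little late instead of being missed.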

Well, if there is a herd of weird dog things chaotically running through lightning strikes, there should be an ominous head that gets deconstructed to the audio. Right? Right.

I thought it would be more responsive to have the head controlled entirely via code. So I rigged it up with enough bones to control expressions.

It was time-consuming to control everything via code, but it felt really responsive and satisfying to be able to control everything dynamically. If the head is being deconstructed, then his eyes should definitely pop out of his head. And then it should break apart entirely. Below is a video of my first attempts at implementing the face. The eyes and mouth are controlled by the mouse. I used ofxBullet for the physics of the head. You can see the debug bullet shapes at the end of the video below.

Ok so his eyes pop out of his head. They should shoot lasers. Definitely shoot lasers.

But the head broke apart and then there was nothing. That just won’t do. Ah yes, his brain is in there. Let’s add a brain, but it should have fungi-looking stuff all over it that reacts to the audio.
